Prompting, Not Programming

Last week I posted my first open source project to Reddit — specifically to r/audiobookshelf, a community dedicated to a self-hosted audiobook server called Audiobookshelf. If you manage your own audiobook files — ripped CDs, DRM-free purchases, things you’ve recorded yourself — Audiobookshelf is a self-hosted tool that organizes and serves them. Audible, but you own it. The subreddit is where its users share tools, ask questions, troubleshoot Docker configurations, and occasionally announce new projects.

Reddit, if you are somehow unfamiliar, is a collection of these communities — some enormous, some tiny and specialized. Each one has its own culture, its own regulars, its own trolls, its own norms. The audiobookshelf community is small and technical. People there know what M4B files are, care about chapter metadata, and have opinions about ffmpeg. It is also, like much of Reddit, a place where anonymous commenters can be blunt to the point of cruelty — and where the line between legitimate criticism and reflexive dismissal is often hard to find.

I posted m4Bookmaker, a desktop app that converts folders of MP3s into properly chaptered M4B audiobook files. It’s open source and free on GitHub. I built it because the existing tools were broken or abandoned, and because I’d been managing my own audiobook collection for twenty-five years and was tired of fighting with custom scripts every time I wanted to fix a chapter.

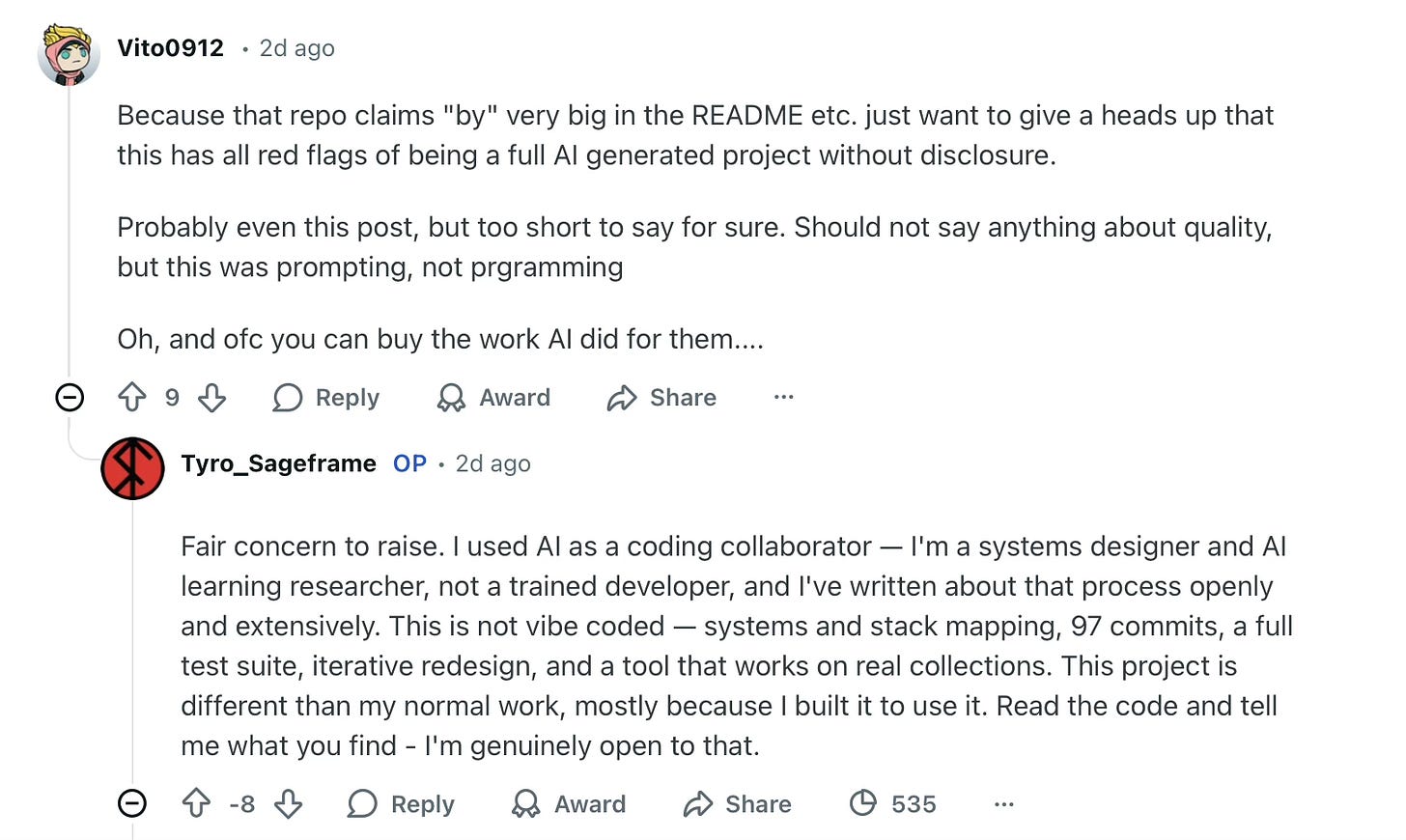

Within the hour, a commenter wrote:

Because that repo claims “by” very big in the README etc. just want to give a heads up that this has all red flags of being a full AI generated project without disclosure.

Probably even this post, but too short to say for sure. Should not say anything about quality, but this was prompting, not programming.

Oh, and ofc you can buy the work AI did for them....

I responded. I said I’d used AI as a coding collaborator — that I’m a systems designer, not a trained developer, and that I’ve written about the process openly and extensively. I pointed to the 97 commits, the full test suite, the iterative redesign. I said: read the code and tell me what you find. I’m genuinely open to that.

Their reply:

And even that comment is AI generated. Wow. Also if you are not a dev, this is exactly vibe-coding. Just creating a product by promoting.

I stared at this for a while.

It stung. I won’t pretend otherwise. This is my first professionally released piece of software. I spent real time on it — not just the code, but the GUI (my first), the Apple-signed and notarized package (my first), the website, the distribution system, the documentation. I used a structured development methodology I designed over fourteen months, tested fully on two previous projects. I know how every layer works because I made every architectural decision. The project went from “I’m annoyed that the existing software silently fails” to shipped product in three days — not because I skipped the hard work, but because the hard work had already been done, over months of developing the knowledge, skills, and process that made three days possible.

And someone glanced at the repo, saw AI fingerprints, and dismissed the whole thing in three sentences.

What settled in after the sting wasn’t really about this person. It was about a world that has decided the only question worth asking about AI-assisted work is a binary: Did a human make this, or did a machine make it? As if those are the only two options. As if there is no territory between “I typed every character by hand” and “I prompted and shipped whatever came back.” As if the process, the judgment, the decisions, the verification, the architecture didn’t matter at all. So much for nuance.

And then came recognition. I’ve been writing about exactly this problem for weeks, and I just slammed into it face-first, like the time I was reading a book and walked into a column.

I am, by the way, aware that “developer writes essay about receiving criticism on Reddit” is its own genre, and not always a flattering one. This isn’t that. Or if it is, at least the code actually works.

Here’s the thing about r/audiobookshelf: the commenter is not wrong about the landscape. In the last two months the subreddit has been flooded with AI-generated projects — low-quality apps that claim features they don’t deliver, repos that are clearly prompt-and-ship, with no tests, no documentation, no evidence that anyone understood the code before releasing it. This commenter has been calling them out for months, and the community has generally agreed. “And... again a vibe-coded/heavy AI project” — eleven upvotes. The crusade is not entirely unjust.

They’re right about the slop. The pattern of prompt-ship-abandon is real. The erosion of trust in the community is real.

And then I walked in with a project that looks, from the outside, exactly like the thing they’ve been fighting. AI fingerprints in the code. A non-developer as the author. A paid download alongside the open source release. Every red flag on their checklist.

From their perspective, I’m just another one. I understand that.

From my perspective, I am the living, breathing evidence that their checklist is broken

I have a structured process-based methodology for building software with AI in which the human makes every design decision. The AI always acts under clear intention and direction, and the human follows along with both reasoning and the actual code. There is human verification at every step — linting, testing, review, commit. I’ve been building and refining this approach for over a year, across three projects of increasing complexity. A computer vision system for wildlife monitoring. A backup orchestrator. m4Bookmaker was the third.

I didn’t sit down and say “make me an audiobook app.” I sat down because the abandoned tool had silently failed on a 45-minute encode for the third time that week, and I thought: I know what this tool needs to do. I know the audio pipeline. I’ve been managing this collection since 1999. I have the methodology to build it.

Three days later, it was shipped, and not because AI wrote it while I watched. Because I had spent months developing the capacity to specify precisely, evaluate critically, verify systematically, and decide architecturally. Is this prompting? I guess, in the same way that conducting is waving a stick. The Ho System is the interpreter — the slow, disciplined practice that makes the fast work possible. The three-day build was the compiler running, not the bootstrap being skipped.

The project has 97 commits (checkpoints in the code). Not 97 prompts — 97 clear decision points where I reviewed output, evaluated it against the architecture, accepted or rejected or revised, and committed with a descriptive message. There are 571 test functions across 21 test files — 340 for the core logic, 231 for the GUI. Code coverage is 90%. The ten modules that handle the pure logic — chapters, metadata, scanning, CLI, the M4B editor — are at 100%. The modules that aren’t are the ones that depend on platform-specific paths, Qt event loops, and live media playback — the things that are genuinely hard to test in a headless environment. I know which lines are uncovered and why. I didn’t type every test function — I specified what to test, demanded coverage targets, reviewed the output, ran the suite, and held the standard until it passed. That’s not a confession. That’s how testing works in a managed project. A senior engineer at a company doesn’t write every test either. The difference between me and a vibe coder isn’t who typed the test. It’s that I demanded the tests exist, understood what they verify, and used them as a quality gate.

That is not vibe coding.

I know how the ffmpeg pipeline works. I know why the encoding settings are what they are. I know why the chapter editor uses a QMediaPlayer instance tied to a table widget with click-to-seek. I know what happens when a damaged MP3 header hits the analyzer and why the repair step has to run before the concat. I know these things because I made the decisions that produced them, verified they worked, and can explain them to anyone who asks.

I chose Python over Swift because I wanted the tool to run on Mac and Windows — something that doesn’t exist even in paid alternatives. I chose PyQt for the GUI because it’s cross-platform and well-documented. I chose GPL-3.0 because if someone improves the tool, that improvement stays open. These are design decisions, not prompts.

“If you are not a dev, this is exactly vibe-coding.”

This sentence is the one that stayed with me, because it reveals the real issue, a deeper trap that is not about my project at all.

A developer, in this person’s framework, is someone who came up through a specific pipeline: learned to code, writes code by hand, understands code at the syntactic level. If you didn’t come through that pipeline, you’re not a developer. And if you’re not a developer but you shipped code, then by definition the AI did the real work and you’re just a prompter wearing a developer costume.

This is an identity argument, not a quality argument. It’s not “your code is bad” — they explicitly said they weren’t commenting on quality. It’s “you don’t belong to our guild, therefore your work can’t be real.” The code could be flawless and it wouldn’t matter, because the objection isn’t about the code. It’s about who made it.

I understand this defensiveness. I do. If you spent years learning to write code — the hard, tedious, interpreter-stage work of developing fluency — and then someone walks in and ships a comparable product in three days using a tool that didn’t exist when you were learning, that feels threatening. It feels like your investment has been devalued. It feels like someone skipped the line.

Nobody writes code from nothing. Every developer builds on libraries, frameworks, Stack Overflow answers, autocomplete, and the accumulated work of thousands of people they’ll never meet. The question was never whether you used tools. The question was whether you understood what you built.

But here’s what that framing misses: I didn’t skip the line. I stood in a different line. I spent seven months developing a methodology before I wrote a line of code. The three-day build was the output of that investment, not a substitute for it. The skills are different from a traditional developer’s skills. The judgment is the same.

A developer is someone who develops — who takes a problem, designs a solution, builds it, tests it, ships it, and can explain every decision they made along the way. The tools they use to get there are not the definition. They’re the means.

I want to be careful here, because there’s a trap on this side too. I don’t want to say “you’re not a real developer unless you can explain every line of code.” That’s just the commenter’s gatekeeping pointed in the other direction. What I will say, with some confidence, is this: you can’t produce reliably good code without understanding what the code does. That’s not an identity claim. It’s a quality claim. It’s a claim the commenter half-heartedly concedes. The code will be worse, the debugging will be harder, and the maintenance will be fragile. The process matters because the output depends on it — not because it determines who gets to call themselves a developer.

But here’s where I have to be honest about something uncomfortable: from the outside, this person cannot tell the difference between what I did and vibe coding. And that is a real problem.

The code is in a repo. The commits are there. The tests are there. The documentation is there. But a stranger scrolling through r/audiobookshelf, who has spent months watching low-quality AI projects flood their community, is not going to read 97 commit messages and run the test suite before forming an opinion. They’re going to see the patterns they’ve learned to recognize — AI-style code structure, a non-developer author, a polished README that looks like it could have been generated — and they’re going to apply their heuristic.

Their heuristic is wrong about me. But it’s not wrong about the landscape. That’s the uncomfortable part.

The same community, in the same period, has seen projects where documented features didn’t work, where responses to user criticism read like they were generated in the same session as the code, and where the gap between what was promised and what was shipped was obvious to anyone who tried to use the tool — with the community expected to do the quality assessment the developer skipped. Those projects got constructive engagement. Mine got “prompting, not programming.”

Same community. Same tools. Different process. Indistinguishable reception. The heuristic can’t tell the difference because the heuristic doesn’t look.

And there’s something more insidious worth naming: the assumption that a polished README or a well-written response equals AI generation. “Even that comment is AI generated. Wow.” If you write clearly, you must be a machine. This is a bias that punishes anyone whose communication skills don’t match the commenter’s expectations for their demographic — non-native English speakers, neurodivergent communicators, people who happen to write well. It’s an equity issue hiding inside a technical critique, and nobody is talking about it.

The visibility gap — the inability to distinguish disciplined AI-assisted development from prompt-and-ship — is the central problem of this moment in software. It vilifies the people who do the work and protects the people who don’t. A vibe coder who ships a broken app and a practitioner who ships a tested, well-architected tool look the same from the outside until someone actually reads the code. And the dirty secret of open source software: almost nobody ever reads the code.

This isn’t a fringe observation. Anthropic’s own 2026 Agentic Coding Trends Report found that developers now use AI in roughly 60% of their work — but fully delegate only 0-20% of tasks. The rest requires active human judgment, verification, and oversight. The collaborative model isn’t an outlier. It’s the direction. The binary the commenter used — programmer or prompter — is a framework the field is already outgrowing.

The instrument we actually need already exists — it’s called judgment. The willingness to look at work carefully, assess its quality, and form an opinion based on evidence rather than pattern-matching. Aristotle said of quality: it’s not an act but a habit. We’re out of the habit.

The binary — human OR machine, developer OR prompter, real OR fake — is doing real damage. It is a cultural refusal to sit with nuance. It collapses a spectrum into a line. On one end, the person who types every character by hand. On the other, the person who pastes a prompt and ships. Everything in between — the messy, fecund territory where humans and AI systems collaborate with varying degrees of skill, judgment, and discipline — is invisible.

This is an audiobook tool. The worst thing that happens if the code is bad is someone’s chapters are wrong. But the same refusal to discern nuance and quality applies to medical software, financial systems, infrastructure. The stakes aren’t always this low.

I’ve been writing about this territory. I’ve been building a methodology for working in it. And last week I discovered that the territory is invisible even when you’re standing in it waving your arms.

I’m not going to attack the commenter because they were mean to me. They’re a good community member doing real work — answering questions, helping people debug their setups, maintaining the ecosystem they care about. Their frustration with AI slop is legitimate. Their pattern-matching failed on one specific project, and rather than reading the code, they doubled down. That’s human. I’ve done it too.

What I want is not an apology. What I want is for the fundamental question to change.

Not “was this made by AI?” but “is it intentional, is it well-built, and does it make things better?”

Not “are you a developer?” but “did you develop this?”

Not “prompting or programming?” but “what was the process, and what did the human actually contribute?”

These are harder questions. They require more than a glance at a repo. They require engaging with the work rather than classifying it. And right now, in a world flooded with genuine slop, I understand why people don’t want to do that work. It’s easier to sort into bins.

But the bins are wrong. And the cost of the wrong bins is that people who are doing something genuinely new — building real software through disciplined human-AI collaboration, with structured process and honest self-assessment and every decision documented — get sorted into the same bin as the people who prompted their way to a broken app and moved on.

The code is public. The methodology is public. The process is documented. The commits tell the story. I’m inviting anyone who cares to look.

m4Bookmaker: github.com/sageframe-no-kaji/m4bmaker · m4bookmaker.sageframe.net

The Ho System: atmarcus.net/work/ho-system

The companion essays on distributed cognition and authorship: The Bootstrapper’s Catch-22 · Thinking Outside the Skull

The thinking was distributed. The responsibility was not.

And the code works. You can check.

The companion piece to this essay, Bad Vibes →, asks what happens when the same pattern — deploying capability without process — plays out at Amazon, in healthcare, and at scale. It's the systemic version. This was the personal one.

— ATM