I Built a Computer Vision System for Harvard's Falcon Cameras — Without Knowing How to Code. Here's the Methodology That Made It Possible.

And why "vibe coding" is a worse problem than most people realize.

Some time ago, I sat on a plane next to a Harvard economics professor. We got to talking, and at some point she started describing the peregrine falcons nesting at Harvard’s Memorial Hall — how they hunted, how they returned, how little anyone had systematically documented about their presence. She was genuinely lit up about it. Not performing enthusiasm. Just captivated by something outside her own discipline. And she kept checking the YouTube feed for action.

That’s the kind of thing that gets me going. When someone with a sharp and curious mind is fascinated by something, I can’t help but drop into it with them — feel into what they’re seeing, start asking questions, start thinking about what it would take to actually build something around it. It’s how I’ve always worked. I get enthusiastic about diving into something I don’t know much about, and amplifying the cross-disciplinary passion of others.

By the time we landed, I had committed to building a real-time wildlife monitoring system — a production computer vision pipeline that would identify and track birds using camera feeds, process observations through AI classification models, and serve data to researchers. I built it around Harvard’s Faculty of Arts and Sciences falcon camera, and I’m now in conversation with researchers there and at other institutions about expanding the work.

I am not a software engineer. I have never been a software engineer. My training is in English literature, biology, and architecture (Oberlin, then MIT M.Arch). My career has been in organizational leadership, systems design, and education. I can code well enough to fix a webpage or program a simple robot, but I had never built a full-stack application, never deployed a production system, never configured a database or written an API endpoint.

Six weeks later, the system, called Kanyō (観鷹, “contemplating falcons”), was live. Not a prototype. Not a demo. A working, deployed system processing real camera feeds, with researchers from multiple institutions already engaged.

I did not do this through heroic self-teaching. I did not grind through tutorials for months. I did it by developing a methodology for working with AI as a structured collaborator — a methodology I now call the Ho Process (歩, “step”).

This post is about what that methodology is, why it matters, and what it reveals about a much larger problem with how people are currently using AI to build things.

The Vibe Coding Problem

There’s a term that entered the discourse last year, courtesy of Andrej Karpathy: vibe coding. The idea is simple — you describe what you want to an AI, it generates code, you run it, and if it works, you move on. No need to understand the internals. Just vibe with it.

It sounds great. According to emerging evidence, it is also a trap, and a weirdly seductive one, because it feels like it’s working right up until the moment everything falls apart.

A study by METR (Model Evaluation and Threat Research) released last year found something remarkable: experienced developers using AI coding tools expected to be 24% faster and, looking back, still believed they had been about 20% faster. They were actually 19% slower. And here’s the part that should concern everyone: after being shown their own data, most participants still believed the tools had helped them.

The tools created a subjective experience of productivity that was measurably false — and that false belief survived contact with the evidence.
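To make the size of that gap concrete, here is a minimal sketch in Python. It assumes the 24% and 19% figures both describe changes in task completion time against a no-AI baseline, and the 100-minute task is a made-up number for illustration, not a figure from the study.

```python
def minutes_after_change(baseline_minutes: float, percent_change: float) -> float:
    """Task time after a percent change in completion time (negative means faster)."""
    return baseline_minutes * (100 + percent_change) / 100


# Hypothetical task that takes 100 minutes without AI (illustrative only).
baseline = 100.0

expected = minutes_after_change(baseline, -24)  # what developers expected
actual = minutes_after_change(baseline, +19)    # what was measured
gap = actual - expected                         # distance between belief and reality

print(f"expected: {expected:.0f} min")  # expected: 76 min
print(f"actual:   {actual:.0f} min")    # actual:   119 min
print(f"gap:      {gap:.0f} min")       # gap:      43 min
```

On those assumptions, a task someone expects to finish in 76 minutes takes 119. The tool doesn't just fail to help; the estimate is off by more than 40% of the original task.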

To be clear: the problem isn’t AI-assisted development. I built a production system with it. The problem is that how you use AI matters enormously, and the default mode — generate, accept, move on — actively degrades both what you build and your ability to understand it.

CodeRabbit’s analysis of hundreds of GitHub pull requests found that AI co-authored code produces about 1.7 times as many issues as human-written code. GitClear’s research across 211 million lines of code showed an eightfold increase in duplicated code blocks and a sharp decline in refactoring, both clear signatures of compounding technical debt. The pattern is consistent: AI tools increase the speed of text generation while decreasing the quality of architectural decisions.

Vibe coding produces code. What it doesn’t produce is understanding. And without understanding, your system becomes a black box you built yourself — opaque, fragile, and unmaintainable. Which is a strange outcome for technology that’s supposed to make us more capable.

What the Ho Process Actually Is

The Ho Process starts from a different premise: AI is an implementation partner, not an oracle. The human maintains architectural authority — the vision, the constraints, the decisions about why something should work a certain way. The AI handles implementation detail — the syntax, the boilerplate, the domain-specific patterns you haven’t memorized.

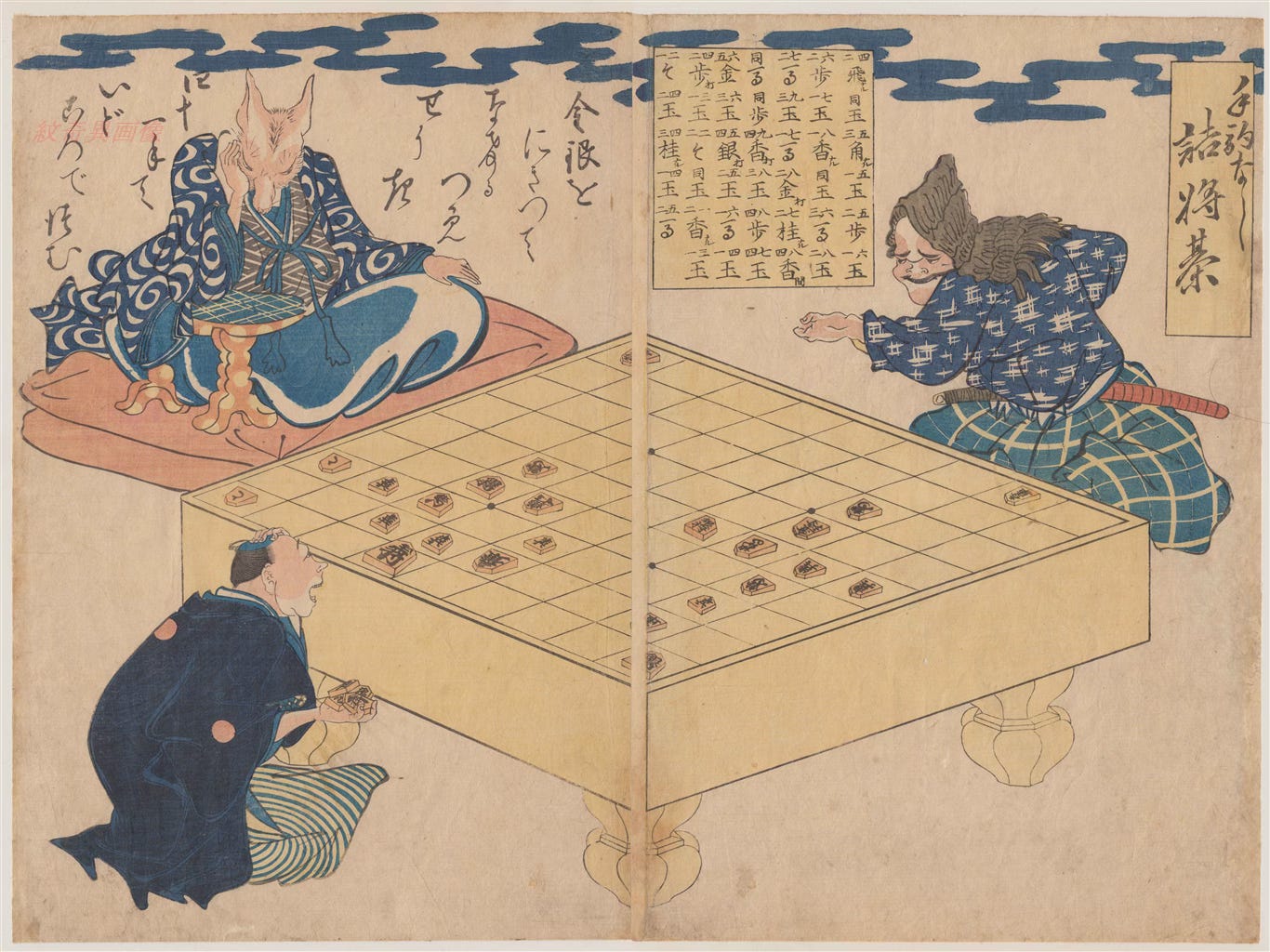

The name comes from the Japanese 歩 (ho), meaning “step.” It’s the most basic piece in shōgi (Japanese chess), where the character is read fu: small, forward-moving, patient. But when it advances into the far third of the board, it can promote to a tokin, which moves like a gold general, a much stronger piece. Small, disciplined steps compound into capability that transcends the starting position.

In practice, the methodology has a few core principles:

The human decides what to build and why. Before any code is generated, there’s an architectural conversation. What’s the system supposed to do? What are the constraints? What are the interfaces? This is systems thinking, not programming — and it’s where experienced professionals (even non-developers) bring enormous value.

AI generates implementation, but the human must achieve functional understanding. You don’t need to be able to write every line from memory. But you need to understand what the code does, why it’s structured that way, and what would break if you changed it. I call this “functional literacy” — the ability to read the system as a coherent whole, not a black box.

Every session ends with reflection. What did I learn? What do I now understand that I didn’t before? Where am I still faking it? This isn’t self-help journaling — it’s how you convert each session from “getting something done” into actually getting better.

Complexity is introduced incrementally, not all at once. The methodology explicitly rejects the “generate the whole thing and hope it works” approach. You build in layers, verify understanding at each layer, and only move forward when the current layer is solid.
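For readers who think better in code, the principles above can be sketched as a simple gate. This is an illustration of the idea, not part of Kanyō or a formal specification of the Ho Process: the `Layer` class, the layer names, and the check questions are all hypothetical, chosen only to show "don't move forward until the current layer is solid."

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Layer:
    """One increment of the system, plus the understanding checks that gate it."""
    name: str
    # Hypothetical self-check questions mapped to whether the human can answer them.
    checks: dict = field(default_factory=dict)

    def is_solid(self) -> bool:
        # A layer with no checks recorded yet is not considered solid.
        return bool(self.checks) and all(self.checks.values())


def next_layer_to_work_on(layers: list) -> Optional[Layer]:
    """Return the first layer that is not yet solid, or None if every layer is."""
    for layer in layers:
        if not layer.is_solid():
            return layer
    return None


# Illustrative layers only; not the actual structure of any real system.
layers = [
    Layer("read frames from the camera feed", {
        "I can explain what the code does": True,
        "I know what breaks if I change it": True,
    }),
    Layer("detect birds in a frame", {
        "I can explain what the code does": True,
        "I know what breaks if I change it": False,  # still faking it here
    }),
    Layer("store observations"),  # not started, so no checks recorded
]

current = next_layer_to_work_on(layers)
print(current.name)  # detect birds in a frame
```

The design choice worth noticing is that the gate is the human's understanding, not whether the code runs. A layer whose tests pass but whose checks are still `False` is not done.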

Why This Isn’t Just a Coding Trick

The theoretical foundations of the Ho Process draw on work that predates AI entirely: Seymour Papert’s constructionism (you learn by building), Vygotsky’s zone of proximal development (learning happens at the edge of current capability with appropriate support), and Donald Schön’s reflective practice (professionals improve by examining their own process).

What AI does is radically reshape the economics of these frameworks. Previously, having a knowledgeable partner available for real-time, patient, infinitely customizable scaffolding required... a person. A very available, very patient person. That was the bottleneck.

AI blows that bottleneck wide open. But only if you structure the collaboration correctly. Without structure, AI becomes what Papert would have called an “instructionist” tool — it gives you answers instead of helping you construct understanding. The whole point of the Ho Process is keeping the collaboration on the right side of that line.

There’s also a genuine inversion of the traditional cognitive apprenticeship model that I keep coming back to. In classic apprenticeship, the expert performs and the novice observes, gradually taking over. In Ho Process work, the AI “performs” (generates code) while the human directs and interrogates. The novice has architectural authority from the start. That’s weird. It’s also, I think, enormously important for who gets to build things and who doesn’t.

What This Means Beyond My Specific Story

I built Kanyō because a conversation on a plane caught fire in my head. But the broader point is about access and capability.

There are a lot of people out there — experienced professionals with deep domain knowledge, strong systems thinking, real organizational judgment — who are locked out of technical building because they don’t have formal engineering training. The default response is either “learn to code” (a multi-year commitment that ignores the value they already bring) or “just use AI” (which, as we’ve seen, produces fragile outputs without understanding).

The Ho Process is a third path: structured collaboration that leverages what you already know while building genuine — if targeted — technical understanding. You don’t need a CS degree. You also can’t just hand the wheel to the AI and hope for the best. There’s something in the middle that actually works, and I think we’ve been weirdly slow to figure out what it looks like.

I’ve written a full position paper laying out the evidence, the theoretical framework, and the open questions. It’s on GitHub, and it’s meant to be read, challenged, and built upon. If you’re interested in the methodology, the research base, or the practical framework, that’s where to go:

→ The Ho Process: Position Paper and Evidence Base

In the next post, I’ll tell the full story of building Kanyō — what the system does, the six-week timeline, the failures, and what it actually feels like to deploy something real when you’re working at the edge of your own competence.

For now: if you’re using AI to build things, pay attention to whether you understand what you’re building. The feeling of productivity is not the same as productivity. That gap is where the real damage happens, and almost nobody is talking about it honestly.

Andrew T. Marcus is a systems designer, organizational strategist, and independent consultant. He studied English literature and biology at Oberlin and holds an M.Arch from MIT. He has spent 25 years building and leading at the intersection of technology, learning, and organizational change. He is the designer of the Ho Process and the builder of Kanyō.